Monitoring of IIS Anycast

In January 2017, The Swedish Internet Foundation launched a new service, "IIS Anycast". We offer our registrars a secondary DNS service, which we run in cooperation with CIRA (the Canadian ccTLD registry). Our secondary DNS is a DNS anycast network.

Anycast (Wikipedia) is a technology where DNS servers in several data centers all over the world share the same IP address. When done right, your DNS queries are answered by a server much closer to you, and therefore much faster. CIRA has done a really great job building the anycast network. A great deal of work has gone into planning the networking and peering of all anycast nodes. So in theory the new Swedish Internet Foundation anycast service should improve query times and make DNS more reliable.

"In theory there is no difference between theory and practice. In practice there is."

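To find out whether practice matches theory, I set up some monitoring. After some evaluation I settled on the following technology stack: InfluxDB to store the measurements, Grafana to display them, and Telegraf to collect them.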

As usual I started a new virtual machine with Ubuntu 16.04 LTS as the operating system. It came as a surprise to me that all three tools were already packaged for Ubuntu. Unfortunately, all of them were very old versions. The next decision was to run updated versions in Docker containers.

It actually took me some time to realize that Docker is a good fit for InfluxDB and Grafana. But because we also wanted to monitor IPv6 servers, Docker was not a good fit for Telegraf.

If you have worked with Docker you know that starting Docker containers is really easy, especially if you take ready-made containers from Docker Hub. But to make managing the application easier, you have to make sure your containers do not store any valuable data inside the container. Containers should be replaceable at any time. This implies that configuration and data are not stored inside the container, but in the host file system. Just as a side note: this makes backups much easier! I chose the following images from Docker Hub: influxdb:alpine and grafana/grafana.

My first tests were to start the containers straight from the command line. Then I wrote a small bash script, because all the command line parameters were hard to remember. I even modified the script to run docker pull first, to update to the latest container version. Unfortunately this would expose all my network ports on the host. Of course I could have exposed the ports only on 127.0.0.1, but that would have prevented the other containers from reaching them, as 127.0.0.1 inside a container is not the same interface as 127.0.0.1 on the host. This required me to run a firewall in front of my containers. I started looking into alternatives.

Please meet docker-compose. This will build a small network of Docker containers, all of which can talk to each other by container name, without exposing any ports to the outside. To install docker-compose I ran the following commands, copied from the docker-compose documentation:

[prism lang="markup"]curl -L "https://github.com/docker/compose/releases/download/1.11.2/docker-compose-$(uname -s)-$(uname -m)" -o /usr/local/bin/docker-compose
chmod +x /usr/local/bin/docker-compose[/prism]

Here is my final docker-compose.yml:

[prism lang="markup"]version: '2'
services:
  influxdb:
    image: influxdb:alpine
    volumes:
      - /etc/letsencrypt:/etc/letsencrypt
      - /home/ulrich/appdata/influxdb/lib:/var/lib/influxdb
      - /home/ulrich/appdata/influxdb/influxdb.conf:/etc/influxdb/influxdb.conf:ro
    ports:
      - "127.0.0.1:8086:8086"
    restart: unless-stopped
  grafana:
    image: grafana/grafana:latest
    volumes:
      - /home/ulrich/appdata/grafana/lib:/var/lib/grafana
      - /home/ulrich/appdata/grafana/etc:/etc/grafana
    environment:
      - GF_SECURITY_ADMIN_PASSWORD=CHANGEME
      - GF_SERVER_ROOT_URL=https://example.com
    links:
      - influxdb
    depends_on:
      - influxdb
    ports:
      - "127.0.0.1:3000:3000"
    restart: unless-stopped[/prism]

Now that InfluxDB and Grafana are up and running there is only the problem of configuration and the actual monitoring left. 🙂

For monitoring I chose Telegraf, mainly because it plays really well with InfluxDB and because of the many preexisting testing tools it ships with. In my case the dns_query input plugin seemed to do what I wanted. We already had several domains served by IIS Anycast, and I used one of them to take timing measurements against both IIS Anycast clouds. So four measurements in total (2 clouds, each on IPv4 and IPv6). The reason I chose to run Telegraf directly on my machine instead of in a Docker container is that Docker still supports IPv6 poorly.

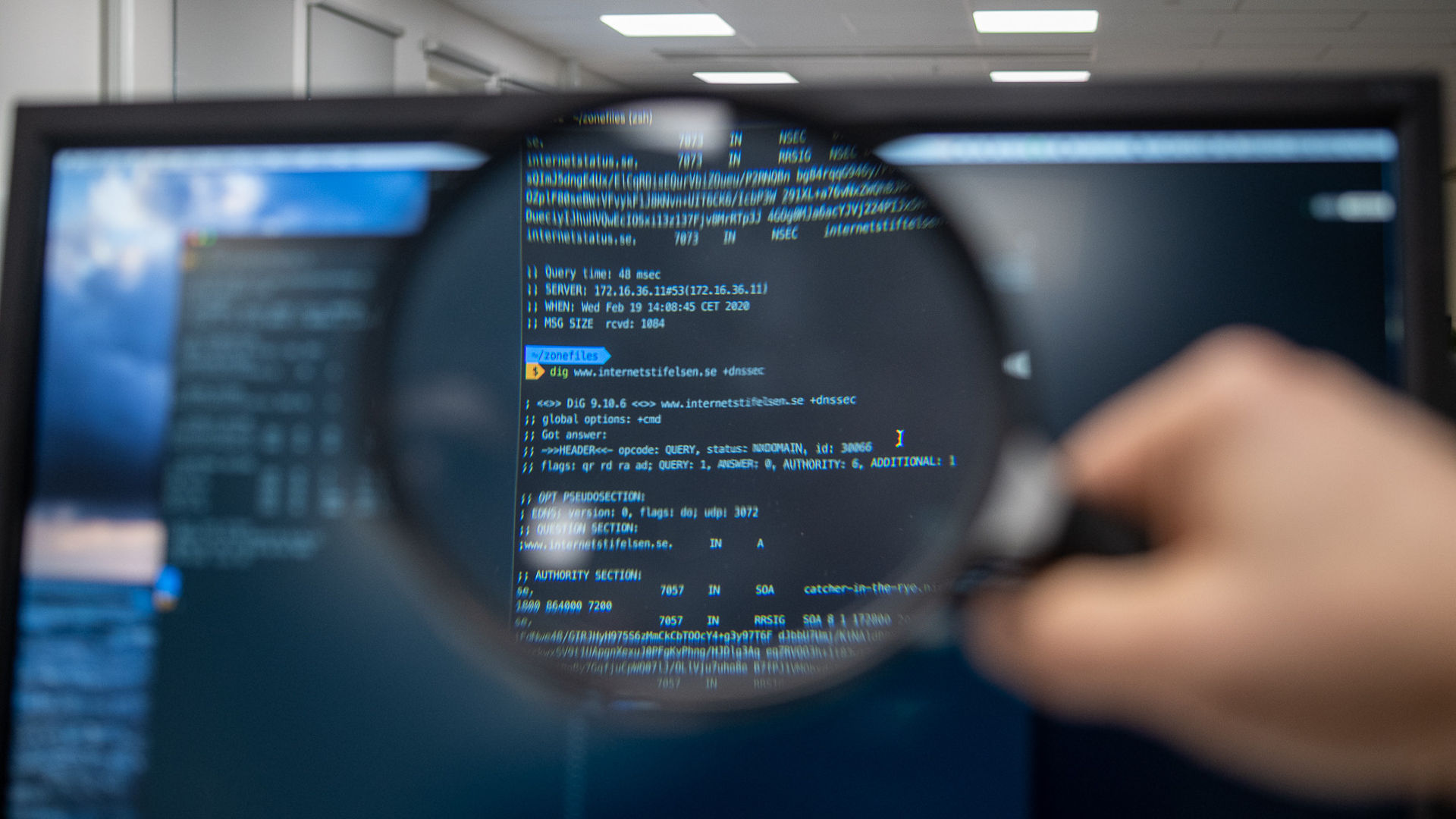

After some time I found that my first telegraf.conf did not really match my needs. For one, my results did not contain enough information, so I needed to add more tags. The other problem is more closely connected to anycast. In an anycast cloud you almost always get an answer, but you do not know who is answering. So is the answer slow because the server is slow, or because you reached an instance on the other side of the globe? In our case the DNS servers are configured to answer with location information when asked for hostname.bind:

[prism lang="markup"]cray-xmp:~ superuser$ dig @ns1.anycast.iis.se hostname.bind txt chaos

; <<>> DiG 9.8.3-P1 <<>> @ns1.anycast.iis.se hostname.bind txt chaos
; (2 servers found)
;; global options: +cmd
;; Got answer:
;; ->>HEADER<
;; flags: qr aa rd; QUERY: 1, ANSWER: 1, AUTHORITY: 1, ADDITIONAL: 0
;; WARNING: recursion requested but not available

;; QUESTION SECTION:
;hostname.bind. CH TXT

;; ANSWER SECTION:
hostname.bind. 0 CH TXT "LHR1"

;; AUTHORITY SECTION:
hostname.bind. 0 CH NS hostname.bind.

;; Query time: 39 msec
;; SERVER: 2620:10a:80eb::40#53(2620:10a:80eb::40)
;; MSG SIZE rcvd: 62[/prism]

So I wrote a new plugin for Telegraf which sends exactly this query and reports not only the timing but also the answer to InfluxDB. (It can be found at https://github.com/ulrichwisser/telegraf)
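To make the query itself more concrete, here is a minimal Python sketch of what asking for hostname.bind looks like on the wire. It is not the code of the plugin (Telegraf plugins are written in Go), and the function names `build_query`, `parse_txt_answer` and `query_hostname_bind` are made up for this illustration. It only handles the simple case of one TXT answer over UDP.

```python
import socket
import struct
import time

TYPE_TXT = 16     # DNS record type TXT
CLASS_CHAOS = 3   # DNS class CH, used for hostname.bind


def build_query(qname="hostname.bind", query_id=0x1234):
    """Build a DNS query packet asking for <qname> TXT in class CHAOS."""
    # Header: ID, flags (0 = standard query, no recursion), QDCOUNT=1, rest 0
    header = struct.pack("!HHHHHH", query_id, 0, 1, 0, 0, 0)
    # QNAME: every label is prefixed by its length, a zero byte ends the name
    labels = b"".join(
        bytes([len(label)]) + label.encode("ascii")
        for label in qname.rstrip(".").split(".")
    )
    return header + labels + b"\x00" + struct.pack("!HH", TYPE_TXT, CLASS_CHAOS)


def skip_name(packet, offset):
    """Return the offset just past a (possibly compressed) domain name."""
    while True:
        length = packet[offset]
        if length == 0:
            return offset + 1
        if length & 0xC0 == 0xC0:  # a compression pointer is two bytes long
            return offset + 2
        offset += 1 + length


def parse_txt_answer(packet):
    """Return the first string of the first TXT answer record, or None."""
    _id, _flags, qdcount, ancount, _ns, _ar = struct.unpack("!HHHHHH", packet[:12])
    offset = 12
    for _ in range(qdcount):
        offset = skip_name(packet, offset) + 4  # skip QTYPE and QCLASS
    if ancount == 0:
        return None
    offset = skip_name(packet, offset)
    rtype, _rclass, _ttl, rdlength = struct.unpack("!HHIH", packet[offset:offset + 10])
    offset += 10
    if rtype != TYPE_TXT or rdlength == 0:
        return None
    txt_len = packet[offset]  # TXT data is a length-prefixed string
    return packet[offset + 1:offset + 1 + txt_len].decode("ascii", "replace")


def query_hostname_bind(server, timeout=2.0):
    """Send the query over UDP and return (answering_node, elapsed_ms)."""
    family = socket.AF_INET6 if ":" in server else socket.AF_INET
    with socket.socket(family, socket.SOCK_DGRAM) as sock:
        sock.settimeout(timeout)
        start = time.monotonic()
        sock.sendto(build_query(), (server, 53))
        response, _ = sock.recvfrom(4096)
        elapsed_ms = (time.monotonic() - start) * 1000
    return parse_txt_answer(response), elapsed_ms
```

Calling `query_hostname_bind("2620:10a:80eb::40")` should return the same kind of information as the dig command above: the name of the answering node and the time the query took.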

My new telegraf.conf looks like this:

[prism lang="markup"]# Global tags can be specified here in key="value" format.
[global_tags]
  dc = "datacenter-1"

# Configuration for telegraf agent
[agent]
  interval = "10s"
  round_interval = true
  metric_batch_size = 1000
  metric_buffer_limit = 30000
  collection_jitter = "0s"
  flush_jitter = "0s"
  precision = ""
  debug = false
  quiet = false
  logfile = ""
  hostname = ""
  omit_hostname = false

[[outputs.influxdb]]
  urls = ["https://127.0.0.1:8086"]
  database = "DATABASENAME"
  retention_policy = ""
  write_consistency = "any"
  timeout = "5s"
  username = "telegraf"
  password = "SeCrEt"

[[inputs.dns_hostname_bind]]
  servers = ["162.219.54.128"]
  [inputs.dns_hostname_bind.tags]
    cloud="Cloud1"
    customer="IIS"
    IP="v4"

[[inputs.dns_hostname_bind]]
  servers = ["2620:10a:80eb::40"]
  [inputs.dns_hostname_bind.tags]
    cloud="Cloud1"
    customer="IIS"
    IP="v6"

[[inputs.dns_hostname_bind]]
  servers = ["162.219.55.128"]
  [inputs.dns_hostname_bind.tags]
    cloud="Cloud2"
    customer="IIS"
    IP="v4"

[[inputs.dns_hostname_bind]]
  servers = ["2620:10a:80ec::40"]
  [inputs.dns_hostname_bind.tags]
    cloud="Cloud2"
    customer="IIS"
    IP="v6"[/prism]
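To illustrate how the tags from this configuration end up next to each measurement, here is a small Python sketch that formats one data point in InfluxDB's line protocol. The measurement name `dns_hostname_bind` and the field names `query_time_ms` and `hostname` are assumptions made for this example; the names the plugin really uses may differ, and it may well store the answering node as a tag rather than a field.

```python
def _escape(text, chars=",= "):
    """Backslash-escape the characters that are special in line protocol."""
    for ch in chars:
        text = text.replace(ch, "\\" + ch)
    return text


def to_line_protocol(measurement, tags, fields, timestamp_ns):
    """Format one point as: measurement,tag=value,... field=value,... timestamp"""
    # Tags are sorted by key, which is what InfluxDB recommends
    tag_part = "".join(
        "," + _escape(key) + "=" + _escape(value)
        for key, value in sorted(tags.items())
    )
    field_items = []
    for key, value in fields.items():
        if isinstance(value, bool):        # check bool first, it is also an int
            rendered = "true" if value else "false"
        elif isinstance(value, int):
            rendered = str(value) + "i"    # integers carry an "i" suffix
        elif isinstance(value, float):
            rendered = repr(value)
        else:                              # everything else is sent as a string
            text = str(value).replace("\\", "\\\\").replace('"', '\\"')
            rendered = '"' + text + '"'
        field_items.append(_escape(key) + "=" + rendered)
    name = _escape(measurement, ", ")      # measurement names escape only , and space
    return name + tag_part + " " + ",".join(field_items) + " " + str(timestamp_ns)


# Hypothetical data point for Cloud1 over IPv4, answered by node LHR1
line = to_line_protocol(
    "dns_hostname_bind",
    {"cloud": "Cloud1", "customer": "IIS", "IP": "v4", "server": "162.219.54.128"},
    {"query_time_ms": 39.0, "hostname": "LHR1"},
    1500000000000000000,
)
print(line)
```

Because cloud, customer and IP are stored as tags, Grafana can later filter and group on them, which is the reason for adding them to every input section.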

After connecting Grafana to InfluxDB, I was able to access all data and configure a monitoring dashboard like this.

As you can see there are a few timing variances, but overall both anycast clouds answer fast to queries.

Since we started the monitoring, there have been a few occasions where the instances in Stockholm or London did not answer. We were able to see the increased query times and to see which instances the answers came from instead.

Overall this proof of concept matches our needs. Of course, this is not full-scale monitoring of both anycast clouds, but that was never our goal. Our goal was to see whether the anycast clouds work and to get some impression of how well they work.
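The kind of question the dashboard answers in such a situation can be sketched in a few lines of Python: group the samples by answering node and compare counts and median query times. The sample values and the node name `STO1` are invented for this illustration (only `LHR1` appears in the dig output above), and in practice Grafana does this grouping for you.

```python
from collections import defaultdict
from statistics import median


def summarize_by_node(samples):
    """Group (node, query_time_ms) samples and return {node: (count, median_ms)}."""
    by_node = defaultdict(list)
    for node, query_time_ms in samples:
        by_node[node].append(query_time_ms)
    return {node: (len(times), median(times)) for node, times in by_node.items()}


# Invented samples: a nearby node answers first, then queries land further away
samples = [
    ("STO1", 3.0), ("STO1", 5.0),
    ("LHR1", 39.0), ("LHR1", 41.0), ("LHR1", 40.0),
]
summary = summarize_by_node(samples)
for node, (count, median_ms) in summary.items():
    print(node, count, median_ms)
```

A sudden shift of answers from one node to another, together with a jump in the median, is the pattern we saw when an instance stopped answering.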