Lessons learned from the .SE algorithm rollover

The Swedish Internet Foundation was the first TLD registry to sign its zone, but eventually you come to a point where an overhaul is needed. This is what we learned from that process.

In 2005, The Swedish Internet Foundation became the first TLD registry to sign its zone. This was done in order to increase both awareness and usage of DNSSEC.

Being first out is always a bit exciting, but once a working implementation is in place it's easy to let it fall into neglect. "If it's not broken, don't try to fix it", as the saying goes. Sooner or later, however, you get to a point where you have to overhaul your system. The Swedish Internet Foundation reached that point when the decision was made to switch the algorithm used to sign the .se zone from SHA1 to SHA256. There was no way of doing that automatically in OpenDNSSEC 1.4.3, the old but reliable version we were running at the time.

Initially we planned to include the upgrade of OpenDNSSEC (henceforth ODS) in the upcoming project to upgrade the whole zone generation environment. We quickly realised that this would be unworkable, as that project would be massively time consuming and we wanted to get the algorithm rollover off the ground as soon as possible. So we decided to run the upgrade of ODS as a separate project.

Starting out, we focussed on the ODS software upgrade, purposefully excluding the OS and any other parts of the existing system, in order to easily be able to isolate and identify possible problems. For this we used virtual machines, which were handily set up using VirtualBox and Vagrant. These virtual machines were installed with the same versions of the OS and ODS, along with the necessary dependencies, as the server in the production environment was running at the time. We did, however, choose to use SoftHSM instead of our regular HSM, since it saved us quite a bit of work and would not (theoretically) make any difference at this stage. In order to verify that the existing keys were picked up by ODS and properly rolled as needed, we used a modified policy with greatly reduced TTL values.

Setting up ODS was uncomplicated for both version 1.4.3 and 2.1.0 (which was the latest version at the time). Of course, as soon as we tried to migrate from one version to the other, we ran into problems. We're using an SQLite3 KASP database, and the database migration script turned out to contain a small bug.

(/convert_sqlite) Error: near line 277: NOT NULL constraint failed: policy.keysPurgeAfter

Fortunately, NLnet Labs was quick to help us out with a patch, and the bug was then permanently fixed in the following release. This got us as far as the last step of the migration before things went sideways again. During this last step the script "ods-migrate" is supposed to extract the IDs of the keys from the zone file and write them to the database, which failed to happen. Thankfully the logs were informative, giving us no less than two error messages.

/etc/opendnssec/conf.xml:47: element Interval: Relax-NG validity error : Element Enforcer has extra content: Interval

Segmentation fault (core dumped)

It turned out to be not so much a bug as an error on encountering a deprecated XML tag in the conf.xml file, and the problem was easily resolved by simply removing the tag.
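For anyone wanting to script this kind of cleanup, here is a minimal sketch in Python. It assumes, going by the error message above, that the offending tag is an `Interval` element directly under `Enforcer`. The sample configuration, including the database path, is made up for illustration and is not our actual conf.xml. Note that `xml.etree.ElementTree` drops comments and the XML declaration when re-serialising, so treat this as a starting point rather than a drop-in tool.

```python
import xml.etree.ElementTree as ET
from typing import Tuple


def strip_deprecated(conf_xml: str,
                     parent_tag: str = "Enforcer",
                     child_tag: str = "Interval") -> Tuple[str, int]:
    """Remove every <child_tag> found directly under a <parent_tag> element.

    Returns the cleaned XML text and the number of elements removed.
    The default tag names are an assumption based on the validity error.
    """
    root = ET.fromstring(conf_xml)
    removed = 0
    # Materialise the parents first so the tree is not modified mid-iteration.
    for parent in list(root.iter(parent_tag)):
        for child in list(parent.findall(child_tag)):
            parent.remove(child)
            removed += 1
    return ET.tostring(root, encoding="unicode"), removed


# Hypothetical, heavily shortened configuration used only for this example.
SAMPLE = """<Configuration>
  <Enforcer>
    <Datastore><SQLite>/var/opendnssec/kasp.db</SQLite></Datastore>
    <Interval>PT3600S</Interval>
  </Enforcer>
</Configuration>"""

cleaned, removed = strip_deprecated(SAMPLE)
print("removed elements:", removed)
print(cleaned)
```

Running the validation and migration again after such a cleanup is still necessary; the sketch only removes the element and does not check the result against the schema.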

So far everything had worked out fairly well. The next step was testing the actual algorithm rollover. Since we're running a physical HSM in production and generate all keys for it manually, we of course wanted to emulate this as closely as possible with SoftHSM. At this point we were running ODS 2.1.1 which, it turned out, completely ignored the tag <ManualKeyGeneration/>. This, in combination with a default key generation period of one year and the short key lifetime set by our lab policy, resulted in ODS, of its own volition, creating a rather impressive number of keys and then proceeding with the algorithm rollover. While the rollover worked like a charm, which in itself looked very promising, we didn't get to test ODS's behaviour when it could not automatically create keys. We tried various creative workarounds, which unfortunately turned out to be did-not-workarounds. This put us on hold for a bit, since we were reluctant to proceed without this part properly tested. Again, NLnet Labs was helpful, and when ODS 2.1.3 was released the bug was fixed and we were back in business.

Now that all the kinks were worked out, we tried the migration and algorithm rollover a few times using virtual servers, just to get comfortable with all the steps of the process. The next part of the preparation was upgrading our stage environment, whose setup corresponds to that of the production environment up to and including a (separate) physical HSM. So far we'd only worked on upgrading the ODS part of the system. Now we needed to be able to reliably set up a whole functional production environment. Since the current one is set up and maintained using Puppet, a lot of work went into updating scripts and packages. Even though the modifications required weren't all that extensive, getting everything ready for a dress rehearsal took a bit longer than expected. An untimely breakdown of the HSM in our stand-by environment didn't help matters. Granted, it was only one of two in a cluster, but we made the call to prioritise a fully functional backup to the production system over running tests in the stage environment. It would take a few days for a new HSM to be delivered, so we replaced the broken one with another from the stage HSM cluster in the meantime.

When all hardware and software was finally in place for the last tests, we were on a tight schedule, even if we'd had the sense to work on setting up the actual new production environment in parallel. What we didn’t need was for something else to go wrong, so of course something did. In the HSM module, there were two different repositories. At some point in the past the name of the repository holding the keys had been changed. All new ZSKs were located in the new repository, but the KSK was still left in the old one. Since everything was working anyway, this peculiarity had gone unnoticed. The migration scripts were understandably not able to handle this scenario, which presented a bit of a problem. The initial idea was to let all previously generated keys carry over to the new setup, but now we had to revise the plan a bit. Instead, we opted to start with a fresh database and import only the ZSK and KSK currently in use. Thankfully, this was fairly uncomplicated and could be accomplished with information from the man pages and only a minimum of experimentation. In retrospect, we should probably have gone for this solution from the start. Going the other route, which turned out to be more complicated, was still a useful exercise, however, and we did find a couple of software bugs, so it wasn’t a complete waste of time.

Having dealt with the repository mismatch, the only further problem we encountered was a slightly too narrow margin on both RAM and disk space. During the algorithm rollover the .se zone grows by about 40% in size, due to the double signatures. The increase in size affected both BIND and ODS and caused the RAM to hit the ceiling during signing. This issue was easily remedied, and further tests went through without any sort of problems or unexpected behaviour from either hardware or software. In light of this, we went on to upgrade RAM and disk space in our production environment as well as on our distribution nodes. We also contacted all of our secondary name server providers to verify that the increased zone file size would not be a problem, just to be sure. All of them assured us that it wouldn't.
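A back-of-the-envelope check along these lines can be done before the rollover rather than during it. The sketch below assumes, as a simplification, that peak memory and disk usage scale roughly linearly with zone size. The 40% growth figure is the one mentioned above, while the safety margin and the example measurements are purely hypothetical.

```python
def projected_peak(current_peak_gib: float, growth: float = 0.40) -> float:
    """Scale a measured peak by the expected relative growth of the zone."""
    return current_peak_gib * (1.0 + growth)


def has_headroom(current_peak_gib: float,
                 capacity_gib: float,
                 growth: float = 0.40,
                 safety_margin: float = 0.20) -> bool:
    """True if the projected peak plus a safety margin still fits in capacity.

    safety_margin is an arbitrary illustrative buffer, not a recommendation.
    """
    needed = projected_peak(current_peak_gib, growth) * (1.0 + safety_margin)
    return needed <= capacity_gib


# Hypothetical measurements: 10 GiB peak during signing today.
for capacity in (16, 32):
    verdict = "enough" if has_headroom(10, capacity) else "too tight"
    print(f"{capacity} GiB: {verdict}")
```

The same check applies to disk space on the signer, on the distribution nodes and on secondary name servers, each with its own measured peak.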

Finally, everything was prepared. The rollover in the stage environment had been a success, the new production environment was up and running, new keys had been generated on the HSM and we had a thoroughly worked out and documented fallback plan. Despite a couple of mishaps, and the various stages of the upgrade taking longer than expected, we could even start the algorithm rollover in the production environment on schedule. We were all set. What could possibly go wrong?

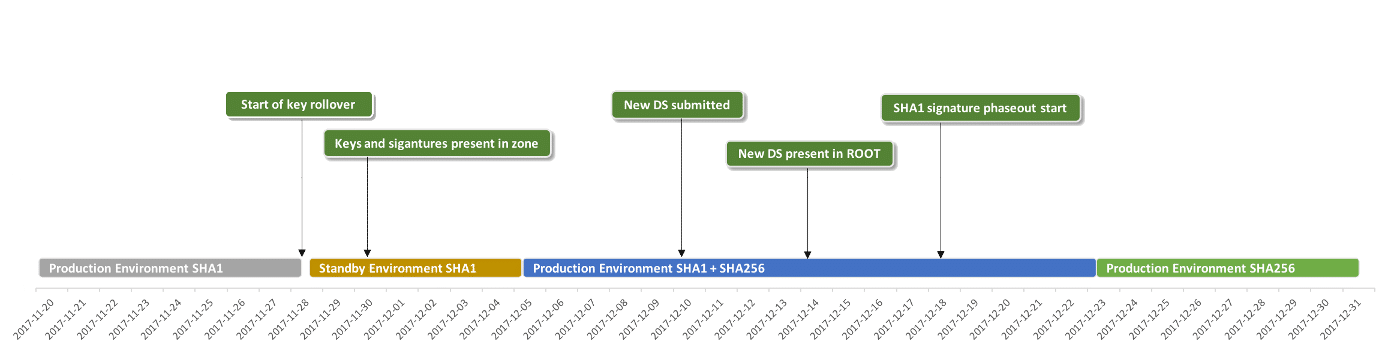

At first, everything seemed to go according to plan. ODS had no trouble picking up the new keys and nothing out of the ordinary appeared in the log files. When the next zone signing was done, however, no new keys and no new signatures were present in the zone. We expected only a small delay, since in every test we made in the stage environment, keys and signatures had shown up either at the next re-signing or the one following it. This time, however, the keys did not show up even after three consecutive re-signings. At this point we either had to take a chance and distribute the zone in its current form, or switch over to the stand-by environment that had been generating and signing zones in parallel, using only the old keys.

Even if nothing was broken as such, we decided to not distribute the zone until all expected keys and signatures were present. That left the option to roll back distribution to the stand-by servers. The switch was uncomplicated and went without incident. While investigating the delay in the production environment we kept an eye on the zone at every signing, to see whether the keys and signatures had shown up, which they did after almost two days. Just to be on the safe side, we let the stand-by servers distribute the zone for a bit longer while we ran extensive tests on the new double-signed zone. When we were satisfied that everything was working as expected, zone distribution was switched back to the new production servers. (Despite our efforts, even with the help from seasoned ODS developers, the cause of the key and signature delay is still unknown.)
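Checking at every signing whether the zone is double-signed is easy to automate. The sketch below counts DNSKEY and RRSIG records per algorithm number in a zone dump. It is not the tooling we used, and it assumes one record per line with an explicit owner name and a numeric TTL, which is what a typical zone transfer dump looks like. It also assumes that the old and new algorithms are RSASHA1 (number 5) and RSASHA256 (number 8). The sample records are invented placeholders.

```python
from collections import Counter

CLASSES = {"IN", "CH", "HS"}
# Distance from the type token to the algorithm field in the RDATA:
#   DNSKEY: flags protocol algorithm public-key
#   RRSIG:  type-covered algorithm labels ...
ALGORITHM_OFFSET = {"DNSKEY": 3, "RRSIG": 2}


def record_type(fields):
    """Return (index, type) of the first field after the owner that is
    neither a numeric TTL nor a class, or None if there is no such field."""
    for i, token in enumerate(fields[1:], start=1):
        if token.isdigit() or token.upper() in CLASSES:
            continue
        return i, token.upper()
    return None


def count_algorithms(zone_lines):
    """Count DNSKEY and RRSIG records keyed by (record type, algorithm)."""
    counts = Counter()
    for line in zone_lines:
        fields = line.split(";", 1)[0].split()  # drop trailing comments
        found = record_type(fields)
        if found is None:
            continue
        index, rtype = found
        offset = ALGORITHM_OFFSET.get(rtype)
        if offset is None:
            continue
        alg_index = index + offset
        if alg_index < len(fields) and fields[alg_index].isdigit():
            counts[(rtype, int(fields[alg_index]))] += 1
    return counts


def is_double_signed(counts, old=5, new=8):
    """True if keys and signatures exist for both the old and new algorithm."""
    return all(counts[(rtype, alg)] > 0
               for rtype in ("DNSKEY", "RRSIG")
               for alg in (old, new))


# Invented sample records; key material, key tags and dates are placeholders.
SAMPLE_ZONE = [
    "example. 3600 IN DNSKEY 257 3 5 AwEAAQ==",
    "example. 3600 IN DNSKEY 257 3 8 AwEAAQ==",
    "example. 3600 IN RRSIG DNSKEY 5 1 3600 20300101000000 20291201000000 11111 example. c2ln",
    "example. 3600 IN RRSIG DNSKEY 8 1 3600 20300101000000 20291201000000 22222 example. c2ln",
    "example. 7200 IN NSEC a.example. NS SOA RRSIG NSEC DNSKEY",
]

counts = count_algorithms(SAMPLE_ZONE)
print(dict(counts))
print("double-signed:", is_double_signed(counts))
```

The same counter can be used at the end of a rollover: once the count of RRSIG records for the old algorithm reaches zero, the old signatures are gone from the zone.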

As the last step of the rollover, a new DS record was submitted to IANA, who were quick to replace the old one in the root zone. Shortly thereafter, the old SHA1 signatures started to be phased out in order of expiring TTL. This went on at a rate of about 70k signatures every two hours, with a slight decline towards the end. Finally, at noon on 23 December, the last SHA1 signature was gone from the .se zone, and with that, the rollover was complete.

Rollover timeline:

All in all, everything turned out well. In addition to our own monitoring, SIDN Labs did a great job keeping an external eye on us over the entire course of the rollover, using the same infrastructure intended to monitor the root KSK rollover (https://rootcanary.org). At every stage, SIDN Labs' measurements showed that resolvers around the world picked up our new resource records as expected. Our schedule was deliberately planned with longer waiting periods, since we wanted to make sure even slow caches had a chance to catch up. We also deliberately avoided making any changes over the weekends. SIDN Labs' measurements gave us the confidence to continue the rollover on schedule, despite the small bumps in the road. Please read more here about the work they did.